Every minute, over 500 hours of video are uploaded to YouTube alone. Streaming quality is moving from HD to 4K to 8K. AR and VR content is no longer experimental.

The challenge isn’t storage. It’s bandwidth, and every generation of codecs has had to answer that challenge anew. AV1 was the answer in 2018. AV2 is being built to answer it for the next decade.

What Is the AV2 Video Codec?

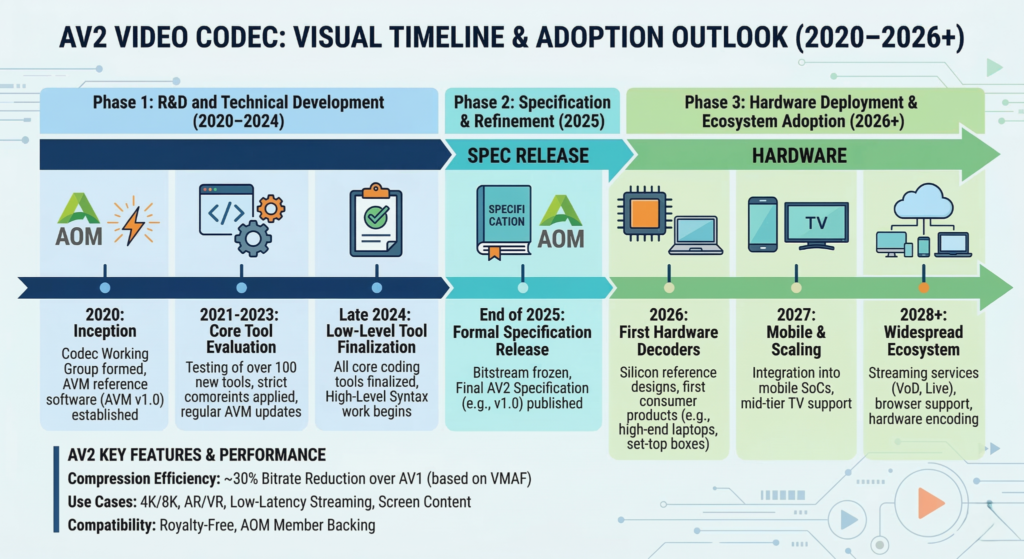

AV2 stands for AOMedia Video 2. It’s the successor to AV1, developed by the Alliance for Open Media (AOMedia), the same consortium responsible for AV1. Development began in 2020, two years after AV1’s release.

Like AV1, it’s open-source and royalty-free, which is what separates it commercially from proprietary alternatives like VVC (H.266), where multiple patent pools make licensing costs impractical for large-scale web deployment.

AOMedia’s founding members and contributors include Google, Apple, Netflix, Amazon, Microsoft, Meta, Cisco, and Intel. That level of industry alignment is part of why AV1 got widespread browser and hardware support faster than most codecs in history.

AV2 is expected to follow the same path, on a longer timeline. Something to consider before you read further: AV2 doesn’t have a final specification yet.

What researchers and engineers are currently testing is the AVM (AOM Video Model), an experimental reference implementation. Most benchmarks you’ll find published right now are AVM results, not a finalized AV2 spec.

The difference matters because AVM performance and final AV2 performance may not match: coding tools can still be added, changed, or dropped before the specification is locked.

Why AV2 Was Built

AV1 was a big jump over VP9 and H.264. But AV1 has real limitations, and they became more obvious as streaming demands grew. Encoding speed is the most painful one.

AV1 is notoriously slow to encode, which makes real-time applications difficult at scale. Hardware encoders have softened that somewhat, but the underlying codec architecture still carries overhead.

The second issue is diminishing returns. As streaming platforms push toward 8K, HDR, and 120fps content, the compression gains from AV1 start to plateau. Research from Netflix’s codec team has consistently shown that next-generation content demands next-generation compression.

AV1 was developed during a period when 4K streaming was becoming mainstream, but newer use cases like 8K, HDR, and high frame rates continue to push compression requirements further.

Then there’s the category of content AV1 was never designed for at all. Standard 2D video compression is relatively mature at common quality levels, but efficiency improvements are still actively researched.

What isn’t solved: efficient compression for volumetric video, 360-degree VR content, AR overlays, and split-screen multi-stream scenarios. AV2 research is considering use cases like VR and multi-stream video, though support for these formats is typically handled outside the core codec through metadata and container formats.

How AV2 Improves on AV1

- Compression Efficiency

- Intra and Inter Prediction

- Advanced Transform Coding

- Entropy Coding

Early AVM benchmark results suggest AV2 will reduce bitrate requirements by approximately 30 to 40% compared to AV1 at equivalent perceptual quality.

That number is consistent across multiple independent evaluations, though it varies by content type. High-motion footage and film grain are typically harder to compress than talking-head video.

For context: AV1 already delivers around 30% better compression than VP9. If AV2 hits its targets, a stream that requires 10 Mbps in AV1 would need roughly 6-7 Mbps in AV2.
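The arithmetic behind that estimate is easy to check. A minimal sketch in Python, where the function name `projected_bitrate_mbps` is made up for illustration and the 30% and 40% figures are the AVM-based projections quoted above, not measured AV2 results:

```python
def projected_bitrate_mbps(av1_mbps: float, reduction: float) -> float:
    """Bitrate needed after a fractional reduction (0.30 means 30% fewer bits)."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return av1_mbps * (1.0 - reduction)


av1_stream = 10.0  # Mbps, the example figure from the text

# Projected range only: both percentages come from early AVM benchmarks.
for reduction in (0.30, 0.40):
    av2_stream = projected_bitrate_mbps(av1_stream, reduction)
    print(f"{reduction:.0%} reduction: {av2_stream:.1f} Mbps")
```

The same function works for any ladder rung, so it can be applied to a whole set of stream bitrates to see where the savings would be largest.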

At the scale Netflix, YouTube, or Amazon Prime operate, that’s a substantial reduction in infrastructure cost. VMAF and PSNR are the primary quality metrics used in AV2 evaluations.

VMAF specifically is a perceptual quality metric that aligns better with how humans actually perceive video quality than raw signal-to-noise measures.
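PSNR, the raw signal-to-noise measure, is simple enough to write out. Here is a minimal sketch in Python, assuming 8-bit samples held in plain lists; the pixel values are invented for illustration. VMAF is a trained model and isn't reproduced here.

```python
import math


def psnr(reference, distorted, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(reference) != len(distorted) or not reference:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((r - d) ** 2 for r, d in zip(reference, distorted)) / len(reference)
    if mse == 0:
        return math.inf  # identical signals
    return 10.0 * math.log10(max_value ** 2 / mse)


reference = [52, 55, 61, 66, 70, 61, 64, 73]
small_errors = [53, 54, 62, 65, 71, 60, 65, 72]   # every pixel off by 1
one_big_error = [52, 55, 61, 66, 70, 61, 64, 81]  # a single pixel off by 8

print(f"small errors everywhere: {psnr(reference, small_errors):.2f} dB")
print(f"one large error:         {psnr(reference, one_big_error):.2f} dB")
```

PSNR only sees squared error. It has no notion of where the error lands or whether a viewer would notice it, which is the gap perceptual metrics such as VMAF try to close.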

Intra prediction handles compression within a single frame. Inter prediction handles compression across frames by identifying what has changed between them.

Early AVM research introduces more granular and flexible prediction tools compared to AV1. It uses extended block partitioning that allows the encoder to adapt more precisely to different image regions.

Fine detail areas (like faces or text) can use smaller partition sizes. Smooth backgrounds can use larger ones. The result is more efficient bit allocation per frame.
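To make the idea concrete, here is a toy quadtree partitioner in Python. It is only a sketch: the variance threshold is an assumed stand-in for the rate-distortion decisions a real encoder makes, it uses square splits only, and none of the names come from AVM.

```python
def variance(block):
    """Population variance of all pixels in a 2-D block."""
    pixels = [p for row in block for p in row]
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) ** 2 for p in pixels) / len(pixels)


def partition(frame, x, y, size, min_size=2, threshold=25.0):
    """Return leaf blocks as (x, y, size) tuples.

    Toy rule (an assumption, not the AVM decision process): keep splitting
    a block into four quadrants while its pixel variance exceeds threshold.
    """
    block = [row[x:x + size] for row in frame[y:y + size]]
    if size <= min_size or variance(block) <= threshold:
        return [(x, y, size)]
    half = size // 2
    leaves = []
    for dy in (0, half):
        for dx in (0, half):
            leaves += partition(frame, x + dx, y + dy, half, min_size, threshold)
    return leaves


# Hypothetical 8x8 frame: flat grey, with a detailed checkerboard in the top-left.
SIZE = 8
frame = [[100] * SIZE for _ in range(SIZE)]
for y in range(4):
    for x in range(4):
        frame[y][x] = 50 if (x + y) % 2 == 0 else 200

leaves = partition(frame, 0, 0, SIZE)
for leaf in leaves:
    print(leaf)
```

The detailed corner ends up covered by small blocks while each flat quadrant stays as a single large block, which is the bit-allocation behaviour described above.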

Inter prediction in AV2 also gets improved motion compensation models, allowing better tracking of complex object motion without wasting bits on transition regions.
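The basic building block of inter prediction is a motion search. A minimal sketch, assuming an exhaustive search with a sum-of-absolute-differences (SAD) cost on whole-pixel offsets; production encoders use far more elaborate models, and the helper names here are hypothetical:

```python
def crop(frame, x, y, size):
    """Extract a size x size block whose top-left corner is (x, y)."""
    return [row[x:x + size] for row in frame[y:y + size]]


def sad(a, b):
    """Sum of absolute differences between two equally sized blocks."""
    return sum(abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb))


def best_motion_vector(prev, cur, x, y, size, search=2):
    """Find the offset (dx, dy) into prev that best predicts a block of cur.

    Returns (cost, dx, dy) for the lowest-SAD candidate within +/- search pixels.
    """
    target = crop(cur, x, y, size)
    best = None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            px, py = x + dx, y + dy
            if px < 0 or py < 0 or px + size > len(prev[0]) or py + size > len(prev):
                continue  # candidate block falls outside the reference frame
            cost = sad(target, crop(prev, px, py, size))
            if best is None or cost < best[0]:
                best = (cost, dx, dy)
    return best


def frame_with_square(left, top, size=8):
    """Hypothetical test frame: black background with a bright 2x2 square."""
    return [[200 if left <= x < left + 2 and top <= y < top + 2 else 0
             for x in range(size)] for y in range(size)]


prev_frame = frame_with_square(2, 2)
cur_frame = frame_with_square(3, 2)   # the square moved one pixel to the right
print(best_motion_vector(prev_frame, cur_frame, x=3, y=2, size=2))
```

When the search finds a perfect match, the encoder only needs to transmit the motion vector. Anything the prediction misses becomes residual data for the transform stage.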

Transform coding is the mathematical process that converts pixel data into frequency coefficients, which are then compressed.
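A one-dimensional DCT shows the principle. This is a plain orthonormal DCT-II written out in Python for illustration; it is not AV1's or AVM's integer transform, and the sample row is made up:

```python
import math


def dct2(samples):
    """Orthonormal 1-D DCT-II: pixel values to frequency coefficients."""
    n = len(samples)
    out = []
    for k in range(n):
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        total = sum(s * math.cos(math.pi * (i + 0.5) * k / n)
                    for i, s in enumerate(samples))
        out.append(scale * total)
    return out


def idct2(coeffs):
    """Inverse of dct2: frequency coefficients back to pixel values."""
    n = len(coeffs)
    out = []
    for i in range(n):
        total = 0.0
        for k, c in enumerate(coeffs):
            scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
            total += scale * c * math.cos(math.pi * (i + 0.5) * k / n)
        out.append(total)
    return out


row = [100, 102, 104, 106, 108, 110, 112, 114]  # a smooth gradient
coeffs = dct2(row)
print([round(c, 2) for c in coeffs])
print([round(p) for p in idct2(coeffs)])
```

For a smooth row like this one, nearly all of the energy lands in the first coefficient, and the rest are small. Small coefficients are cheap to quantize and entropy-code, which is where the compression comes from.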

Current AVM research explores several new tools on top of AV1’s transform set, including:

• IST (Intra Secondary Transform): a secondary transform pass to improve compression of residual data after the primary transform

• TCQ (Trellis-Coded Quantization): uses dynamic programming to find more optimal coefficient representations, reducing distortion at a given bitrate

• ATC (Adaptive Transform Coding): lets the encoder select from a wider set of transform types per block rather than using a fixed set

• CCTX (Cross-Component Transform): exploits correlation between luma (brightness) and chroma (color) channels to reduce redundancy between them

No single tool accounts for the full compression gain. The projected 30 to 40% improvement comes from combinations of these experimental tools across the pipeline.

After transform and quantization, the remaining data is encoded using entropy coding. AV2 builds on AV1’s multi-symbol adaptive arithmetic coding with improved context modeling and probability estimation.

Better context models mean the encoder can predict the probability of each symbol more accurately, compressing the output bitstream further.
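The link between probability estimates and bitstream size can be shown with the ideal code length, which is -log2(p) bits for a symbol the model assigns probability p. A minimal sketch with invented symbols and two hypothetical models; a real arithmetic coder gets close to these totals but this is not one:

```python
import math


def ideal_bits(symbols, model):
    """Total ideal code length in bits: the sum of -log2(p) over all symbols."""
    return sum(-math.log2(model[s]) for s in symbols)


# Hypothetical data: mostly-zero flags, as is common for quantized coefficients.
symbols = [0, 0, 0, 1, 0, 0, 0, 0]

uniform_model = {0: 0.5, 1: 0.5}       # assumes nothing about the data
skewed_model = {0: 0.875, 1: 0.125}    # matches the actual symbol statistics

print(f"uniform model: {ideal_bits(symbols, uniform_model):.2f} bits")
print(f"skewed model:  {ideal_bits(symbols, skewed_model):.2f} bits")
```

The symbols are identical in both cases. Only the model changed, and the better prediction alone shrinks the output.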

AV2’s Standout Features

- Extended Recursive Partitioning

- Luma-Chroma Decoupling

- Superior Film Grain Synthesis

- AR, VR, and Volumetric Video Support

- Low-Bitrate Mobile Performance

AVM experiments include a more flexible block partitioning system than AV1. AV1 uses superblocks of up to 128×128 pixels (64×64 is also allowed) that subdivide into smaller blocks.

AV2 extends this with additional partition shapes and subdivision options. The practical effect: finer-grained control over where bits are spent within each frame.

Most codecs process luma and chroma together. AVM experiments explore allowing luma and chroma channels to be processed more independently, which could mean more efficient encoding of content with high chroma variation, such as HDR footage with wide color gamut.

This is directly connected to the CCTX transform tool mentioned above. The two work together.

Cinematic content often contains film grain, which is expensive to compress because it’s basically structured noise. AV2 builds on AV1’s film grain synthesis system (specified separately as AFGS1, AOMedia Film Grain Synthesis 1) with a more expressive parameter set.

Instead of encoding grain directly, the encoder strips it, transmits a compact set of parameters, and the decoder synthesizes it on playback. For high-grain content, this can save a meaningful percentage of the bitrate budget.
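The strip, signal, and resynthesize flow can be sketched in a few lines. This is a deliberately crude toy, assuming a single grain-strength parameter and Gaussian noise on one row of pixels; AV1's actual grain model is considerably more elaborate, and every name below is hypothetical:

```python
import random
import statistics


def box_denoise(row, radius=1):
    """Very rough denoiser: average each sample with its neighbours."""
    out = []
    for i in range(len(row)):
        window = row[max(0, i - radius): i + radius + 1]
        out.append(sum(window) / len(window))
    return out


def estimate_grain_sigma(grainy, denoised):
    """Encoder side: summarize the removed grain as one number (its std dev)."""
    residual = [g - d for g, d in zip(grainy, denoised)]
    return statistics.pstdev(residual)


def synthesize_grain(clean, sigma, seed):
    """Decoder side: add fresh pseudo-random grain of the signalled strength."""
    rng = random.Random(seed)
    return [p + rng.gauss(0.0, sigma) for p in clean]


# Hypothetical source row: a flat grey area with grain on top.
source_rng = random.Random(7)
grainy = [128 + source_rng.gauss(0.0, 4.0) for _ in range(64)]

denoised = box_denoise(grainy)                   # this is what gets compressed
sigma = estimate_grain_sigma(grainy, denoised)   # this is what gets signalled
playback = synthesize_grain(denoised, sigma, seed=1234)
print(f"signalled grain strength: {sigma:.2f}")
```

The decoder's grain is not the original grain, only noise with similar statistics. That is the trade: the viewer gets the texture back without the encoder spending bits on every noisy sample.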

Standard flat video doesn’t require the codec to know anything about spatial geometry. VR and volumetric content does. Some AVM experiments explore tools that may benefit 360-degree and multi-stream video, though these are not finalized features of the codec.

These use cases are being considered during development, but like previous codecs, much of the implementation will depend on higher-level systems beyond the core codec.

How well these actually perform will depend on the final spec and encoder implementations, but the groundwork is being explored in a way it wasn’t during AV1’s development.

One area of focus in AV2 research is improving quality at very low bitrates, specifically sub-1 Mbps streaming for mobile users on constrained connections. With so few bits to work with, every encoding decision matters more.

AV1 handles this reasonably well. AV2 is being optimized to handle it better, which has direct implications for video accessibility in markets with limited broadband infrastructure.

AV2 vs. AV1 vs. VVC: Direct Comparison

| Feature | AV1 | VVC (H.266) | AV2 |
|---|---|---|---|
| Release year | 2018 | 2020 | Not finalized (mid-late 2020s expected) |
| Compression vs. previous gen | ~30% vs VP9 | ~40-50% vs HEVC | ~30-40% vs AV1 (based on AVM research) |
| Licensing | Royalty-free | Licensed (multiple patent pools) | Royalty-free (expected) |
| Encoding complexity | High | Very high | Extremely high (experimental) |
| Hardware decoder availability | Widespread (2022+) | Very limited | Not yet |
| Browser support | Chrome, Firefox, Safari, Edge | None in major browsers | None yet |

VVC is the closest technical competitor. It was finalized in 2020 by the Joint Video Experts Team, a collaboration between ITU-T VCEG and ISO/IEC MPEG, and delivers comparable compression gains. The problem with VVC for web use is licensing.

Multiple patent pools control VVC rights, and the licensing fees are high enough that Netflix, Google, and Amazon have largely avoided the format. AV2, like AV1, sidesteps that entirely.

Google, Amazon, Netflix, and Microsoft all have commercial incentives to keep web video codecs royalty-free. That’s what keeps AOMedia funded and active. VVC’s licensing structure effectively hands over the web video market to AV1 and eventually AV2 by default.

AV2 Release Timeline

AOMedia began AV2 development in 2020. The specification was targeted for completion around late 2025, though codec standardization timelines routinely slip.

There have been no public updates to the draft spec confirming that deadline has held.

Hardware decoding support typically follows a spec release by 18 to 36 months. When AV1 was finalized in 2018, hardware AV1 decoders barely existed in consumer products.

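The first consumer GPUs with hardware AV1 decoding (NVIDIA RTX 30 series, AMD RX 6000 series, and Intel’s Xe graphics) arrived around 2020. Hardware AV1 encoding followed in 2022 to 2023 with Intel Arc, NVIDIA RTX 40 series, and AMD RX 7000. Browser software decoding preceded hardware decoding by a couple of years.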

Based on previous codec adoption patterns, a possible timeline could look like:

• AV2 specification: likely mid-to-late 2020s

• Software decoding: shortly after specification

• Hardware decoding: several years later

• Mainstream deployment: toward the end of the decade

These timelines are speculative and depend heavily on industry adoption and hardware support.

Netflix’s Andrey Norkin, one of the engineers most involved in AV2 architecture, presented detailed findings on AV2’s coding tools at QoMEX 2025.

His presentations are among the most technically precise public materials available on AV2’s current state.

Which Industries Will Benefit from AV2?

- Streaming Platforms

- AR and VR Content

- Cloud Gaming

- Live Sports Broadcasting

Netflix, YouTube, and Amazon Prime collectively serve billions of hours of video per month. A 30-40% bitrate reduction at equivalent quality translates directly into reduced CDN costs and the ability to serve higher quality at existing bandwidth levels.

For YouTube specifically, where a significant portion of traffic comes from mobile users on constrained connections, the low-bitrate performance gains are relevant.

Current VR streaming at high quality requires bitrates that exceed what most consumer connections can sustain. AV2’s compression efficiency at high resolutions, together with the spatial-video tools being explored in AVM, could make it better suited to this use case than AV1.

Whether it’s enough to make VR streaming genuinely practical depends on encoder implementations that don’t exist yet.

Cloud gaming is bandwidth-constrained in a different way from standard video. Latency matters as much as quality. AV2’s improved inter prediction and partition flexibility could allow better quality at lower bitrates without significantly increasing encoding latency compared to AV1.

Software-only AV2 encoding is likely to be too slow for real-time gaming for years.

High-motion, high-detail content like live sports is one of the hardest scenarios for video compression. Fine spatial detail (grass, crowd, jersey textures) combined with fast motion creates encoder stress.

AV2’s adaptive transform selection and extended prediction tools are specifically useful here, though the encoding complexity will be a serious obstacle for live applications until dedicated hardware exists.

Will AV2 Replace AV1?

Probably not in any clean or fast way. AV1 has widespread hardware support. As of 2024, most consumer GPUs, high-end mobile SoCs, and all major browsers support AV1 decoding.

That installed base doesn’t disappear. Streaming platforms won’t transcode their entire libraries to AV2 overnight. The realistic scenario is parallel deployment. New hardware will ship with AV2 decoder support.

Platforms will begin encoding premium or 4K/8K content in AV2 first, where the bandwidth savings justify the encoding cost. Legacy and standard-definition content will stay in AV1 or H.264 for years.

Encoding complexity is the main obstacle. AV1 encoding is already slow compared to H.264. AV2 encoding is expected to be slower still. Without dedicated hardware encoders, AV2 is impractical for live or near-live applications.

Hardware encoder silicon will follow decoder silicon, but it follows it slowly.

The AV1 transition took roughly five to seven years from spec to mainstream deployment. For AV2, that puts mainstream streaming adoption somewhere around 2030 to 2032.

The Future of Open Video Codecs

AV2 is AOMedia’s current focus, but codec development doesn’t stop at AV2. Neural compression is an active research area. AI-assisted encoding systems that use machine learning models to make compression decisions are already outperforming traditional codecs in constrained academic benchmarks.

Google’s research on neural video compression and work from groups at DeepMind and Meta suggest that learned codecs will eventually produce better compression than any hand-designed transform coding system.

The problem is compute: neural codecs are currently too expensive to run at scale on standard hardware. The inflection point is hardware. As dedicated neural processing units become standard in consumer silicon, the compute cost of learned compression drops.

Some researchers speculate that future codecs may increasingly rely on machine learning approaches, though transform-based codecs like AV2 are still the current industry standard. Whatever follows it will probably look structurally different.

Frequently Asked Questions

What is the AV2 codec?

AV2 (AOMedia Video 2) is the next-generation open video compression format developed by the Alliance for Open Media. It’s the successor to AV1 and aims to deliver 30 to 40% better compression at equivalent quality.

What’s the difference between AV1 and AV2?

AV2 introduces new coding tools such as IST, TCQ, ATC, and CCTX, along with extended block partitioning and improved film grain synthesis. Its research model also explores tools that may benefit spatial and multi-stream video, though these are not finalized features. The expected compression improvement is 30 to 40% over AV1 at matching perceptual quality.

Is AV2 released?

No. The specification was targeted for finalization around late 2025, but there have been no public updates to the draft spec confirming that date. Current benchmarks use AVM, an experimental reference model, not a finalized spec.

Is AV2 royalty-free?

Yes. Like AV1, AV2 is being developed under AOMedia’s royalty-free licensing framework.

Will AV2 require new hardware?

Yes, for efficient decoding at scale. Software decoding will be available first, followed by hardware decoder support in GPUs and mobile SoCs, likely 18 to 36 months after the spec is finalized.

What is AV2 used for?

AV2 is designed for high-quality video streaming, AR/VR content, live broadcasting, cloud gaming, and any application where bandwidth efficiency and visual quality need to improve simultaneously.

What is AVM?

AVM stands for AOM Video Model. It’s the experimental reference implementation used to test and develop AV2 coding tools before the final specification is locked. When you read AV2 benchmark results published before the specification is finalized, they’re measuring AVM, not a finished codec.